Forty Minutes, Eleven Tabs, No Answer

I spent forty minutes last month researching whether to switch our team’s project management tool.

I read comparison articles, watched a demo, and skimmed three Reddit threads. At the end of it I had eleven open tabs and no clearer sense of what we should actually do.

Then, almost by accident, I typed the real question into an AI prompt. Not “compare Asana and Linear.” That was the research question, and it was the wrong one.

The actual question I needed was: “We’re a six-person team, our main failure mode is tasks getting lost in handoffs, and we’ve tried two tools already. What should I be asking before choosing another?”

The response took ninety seconds to read. I made the decision in ten minutes, and it held.

The distinction between those two approaches took me an embarrassingly long time to see.

Retrieval vs. Orientation

The Question Before the Search

Most people use AI the way they used search engines: to retrieve information they don’t already have. That’s the obvious use, and it’s not wrong.

The less obvious use, the one that actually changes how long things take, is using a prompt to surface the question you haven’t thought to ask yet.

The shift is from retrieval to orientation. Search finds what you already know to look for. A well-constructed prompt can map the relevant considerations, name which ones you’re probably overweighting, and locate where the real decision lives.

That reframing step used to require a trusted expert or several hours of reading. It still requires your judgment. The raw material now arrives in minutes.


Why Faster Feels Wrong

We were trained to show our research. Arriving at a decision quickly, with help from something that isn’t a person, can feel like skipping steps, as if you haven’t earned the answer.

Or maybe that’s just me. Either way, the discomfort is real, and it isn’t a reliable guide to whether the decision that follows is sound.

The Two-Question Filter

Before Opening a Browser

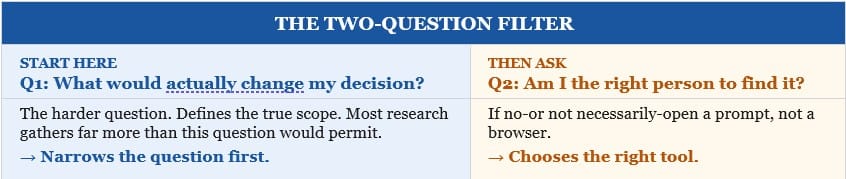

Before opening a browser, I now ask two questions. The first: what information would actually change my decision?

This is the harder one. It’s rarely asked, which is why most research gathers far more than it needs. The second: am I the right person to find that at all?

Answering no to the second, even tentatively, is usually enough reason to open a prompt instead of a tab.

The prompt that worked wasn’t clever. It named the team size, the failure mode, the history.

Specificity separates a prompt that orients from one that just retrieves, and that is precisely what the Two-Question Filter is designed to force.

That’s the whole filter. Two questions, asked before you open anything else.


Narrower Questions, Faster Answers

Most research tasks aren’t really research. They’re orientation: an attempt to feel less uncertain before making a call. A prompt handles that well when the decision has a defined scope.

For genuinely open-ended questions, though, a prompt is a starting point, not a replacement for slower thinking. That line is worth knowing.

The next time you feel yourself opening tabs, stop for thirty seconds. Ask what would actually change your decision. That question, answered honestly, is often the whole work.

“The quality of your output is constrained by the quality of your question, not the quantity of your search.”
